|

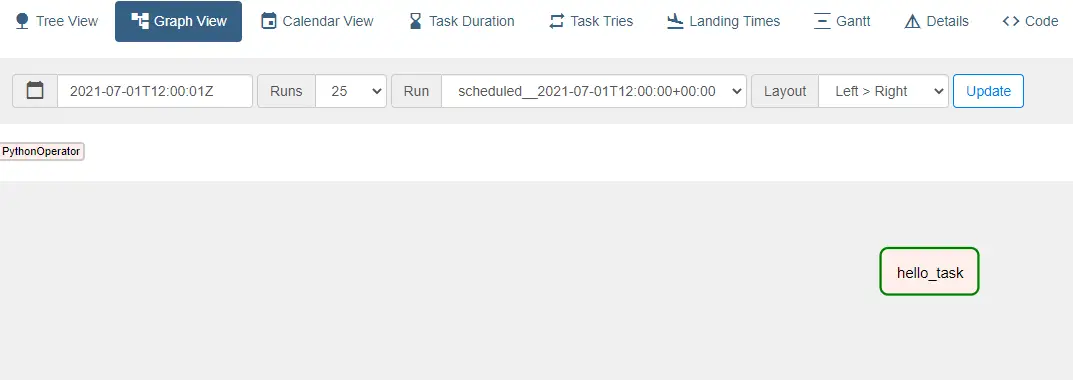

11/20/2023 0 Comments Airflow dag dependencyTaskFlow API with either Python virtual environment (since 2.0.2), Docker container (since 2.2.0), ExternalPythonOperator (since 2.4.0) or KubernetesPodOperator (since 2.4.0). If you have tasks that require complex or conflicting requirements then you will have the ability to use the Using the TaskFlow API with complex/conflicting Python dependencies ¶ In contrast, with the TaskFlow API in Airflow 2.0, the invocation itself automatically generates """ ) extract_task > transform_task > load_task doc_md = dedent ( """\ # Load task A simple Load task which takes in the result of the Transform task, by reading it from xcom and instead of saving it to end user review, just prints it out. """ ) load_task = PythonOperator ( task_id = "load", python_callable = load, ) load_task. This computed value is then put into xcom, so that it can be processed by the next task. doc_md = dedent ( """\ # Transform task A simple Transform task which takes in the collection of order data from xcom and computes the total order value. """ ) transform_task = PythonOperator ( task_id = "transform", python_callable = transform, ) transform_task. This data is then put into xcom, so that it can be processed by the next task. In this case, getting data is simulated by reading from a hardcoded JSON string. doc_md = dedent ( """\ # Extract task A simple Extract task to get data ready for the rest of the data pipeline. In Airflow 1.x, this task is defined as shown below:Įxtract_task = PythonOperator ( task_id = "extract", python_callable = extract, ) extract_task. Let’s examine this in detail by looking at the Transform task in isolation since it is It is all abstracted from the DAG developer. """ ) extract_task > transform_task > load_taskĪll of the processing shown above is being done in the new Airflow 2.0 DAG as well, but loads ( total_value_string ) print ( total_order_value ) extract_task = PythonOperator ( task_id = "extract", python_callable = extract, ) extract_task. xcom_pull ( task_ids = "transform", key = "total_order_value" ) total_order_value = json. xcom_push ( "total_order_value", total_value_json_string ) def load ( ** kwargs ): ti = kwargs total_value_string = ti. """ data_string = ' total_value_json_string = json. Documentation that goes along with the Airflow TaskFlow API tutorial is located () """ () def extract (): """ # Extract task A simple Extract task to get data ready for the rest of the data pipeline. datetime ( 2021, 1, 1, tz = "UTC" ), catchup = False, tags =, ) def tutorial_taskflow_api (): """ # TaskFlow API Tutorial Documentation This is a simple data pipeline example which demonstrates the use of the TaskFlow API using three simple tasks for Extract, Transform, and Load. Import json import pendulum from corators import dag, task ( schedule = None, start_date = pendulum. Accessing context variables in decorated tasks.Consuming XComs between decorated and traditional tasks.Adding dependencies between decorated and traditional tasks.Using the TaskFlow API with Sensor operators.Dependency separation using Kubernetes Pod Operator.Dependency separation using Docker Operator.Using Python environment with pre-installed dependencies.Virtualenv created dynamically for each task.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed